You give it files. It figures out what each one is about, then places them in 3D space so that related files sit close together.

On-device processing

MobileCLIP runs locally on your Mac's Neural Engine. Your files never touch a server. No accounts, and it works offline.

Mix any file type

Drop images, text files, source code, and PDFs into the same space. The model understands all of them and places them relative to each other.

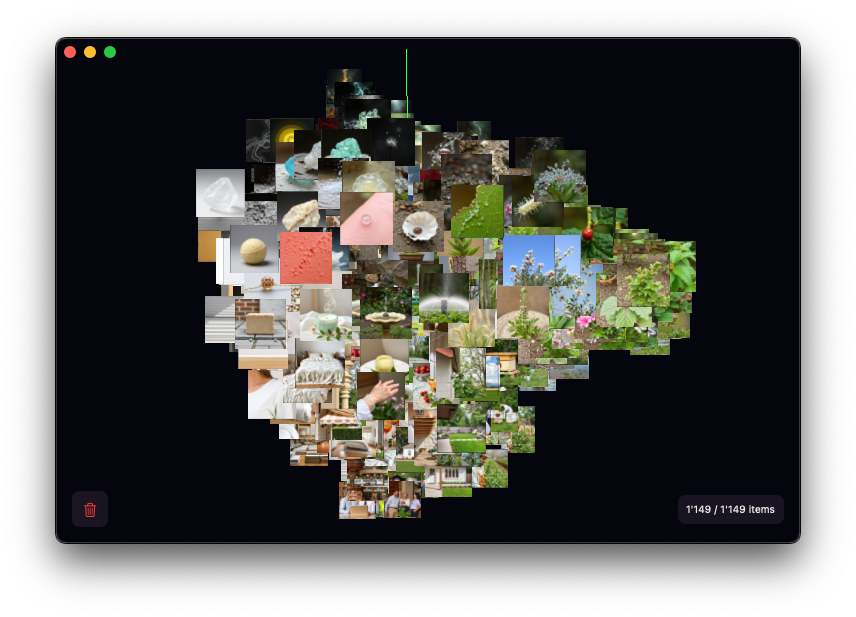

3D visualization

Embeddings are reduced to 3D and rendered with Metal. Rotate, zoom, pan around. Handles hundreds of items without breaking a sweat.
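The page doesn't say which reduction method the app uses. As a rough illustration only, here is a Swift sketch of one common option, PCA, which finds the top three principal components by power iteration with deflation. The function names, the `Double` element type, and the default iteration count are assumptions for this example, not the app's code.

```swift
import Foundation

// Hypothetical helper: dot product of two equal-length vectors.
func dot(_ a: [Double], _ b: [Double]) -> Double {
    zip(a, b).reduce(0) { $0 + $1.0 * $1.1 }
}

// Hypothetical helper: scale a vector to unit length (zero vectors are returned as-is).
func normalize(_ v: [Double]) -> [Double] {
    let norm = dot(v, v).squareRoot()
    return norm > 0 ? v.map { $0 / norm } : v
}

/// Sketch: reduce embeddings to [x, y, z] with PCA.
/// Assumption: PCA stands in for whatever reduction the app actually uses.
func projectTo3D(_ embeddings: [[Double]], iterations: Int = 100) -> [[Double]] {
    guard let dim = embeddings.first?.count, dim > 0 else { return [] }
    let n = Double(embeddings.count)

    // Center the data so the components pass through the mean.
    var mean = [Double](repeating: 0, count: dim)
    for e in embeddings { for j in 0..<dim { mean[j] += e[j] / n } }
    var data = embeddings.map { e in (0..<dim).map { e[$0] - mean[$0] } }

    var coords = [[Double]](repeating: [], count: embeddings.count)
    for _ in 0..<min(3, dim) {
        // Power iteration on X^T X without building the covariance matrix.
        var v = normalize((0..<dim).map { Double($0 % 7) + 1 })  // deterministic non-zero start
        for _ in 0..<iterations {
            let scores = data.map { dot($0, v) }            // X v
            var next = [Double](repeating: 0, count: dim)    // X^T (X v)
            for (row, s) in zip(data, scores) {
                for j in 0..<dim { next[j] += row[j] * s }
            }
            v = normalize(next)
        }
        // Record each point's coordinate on this component, then deflate it away.
        for i in data.indices {
            let s = dot(data[i], v)
            coords[i].append(s)
            for j in 0..<dim { data[i][j] -= s * v[j] }
        }
    }
    // Pad with zeros if the input has fewer than 3 dimensions.
    return coords.map { $0 + [Double](repeating: 0, count: 3 - $0.count) }
}
```

Each power-iteration step is one pass over the data, so with embeddings of a few hundred dimensions and hundreds of files this stays cheap. Nonlinear methods such as UMAP or t-SNE often separate clusters more visibly, at the cost of considerably more code.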

Drag and drop

Drop files onto the window. They show up in the visualization as embeddings are computed. No import wizard, no project setup.

Native macOS

SwiftUI app. Small download, opens fast. Looks and feels like the rest of your Mac.

Semantic clustering

Files about similar things end up near each other. Useful for spotting patterns you wouldn't notice otherwise, or just getting a feel for what's in a folder.
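"Near each other" comes from the embeddings themselves. A common way to measure it is cosine similarity. The Swift sketch below assumes that metric (the app might instead use distance in the reduced 3D space) and uses hypothetical file names and toy 3-dimensional vectors in place of real MobileCLIP output.

```swift
import Foundation

/// Cosine similarity: 1 = same direction, 0 = unrelated (orthogonal).
/// Assumption: the app's notion of "similar" may differ; this is one common choice.
func cosineSimilarity(_ a: [Double], _ b: [Double]) -> Double {
    precondition(a.count == b.count, "embeddings must share a dimension")
    var dotProduct = 0.0, normA = 0.0, normB = 0.0
    for (x, y) in zip(a, b) {
        dotProduct += x * y
        normA += x * x
        normB += y * y
    }
    let denom = (normA * normB).squareRoot()
    return denom > 0 ? dotProduct / denom : 0
}

/// Hypothetical helper: the k files most similar to `query`, most similar first.
func nearestNeighbors(of query: String, in embeddings: [String: [Double]], k: Int) -> [String] {
    guard let q = embeddings[query] else { return [] }
    let scored: [(name: String, score: Double)] = embeddings
        .filter { $0.key != query }
        .map { (name: $0.key, score: cosineSimilarity(q, $0.value)) }
    return scored.sorted { $0.score > $1.score }.prefix(k).map { $0.name }
}
```

For example, with toy vectors where `cat.jpg` is `[0.9, 0.1, 0.0]`, `kitten.png` is `[0.8, 0.2, 0.0]`, and `invoice.pdf` is `[0.0, 0.1, 0.9]`, the nearest neighbor of `cat.jpg` is `kitten.png`.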